By Casey Muratori

I do not know who made this rock or how long it has been there, but when I first happened upon it, I found that it resides in a nicely isolated part of the island where there’s nothing else in view, so the frame rate is always high even in full debug mode. Plus, it’s big, unobstructed, and easily pickable in the editor without fear of selecting any other objects. These properties make it perfect for testing editing features in progress, so the rock and I have become fast friends.

Normally, I do not want large rocks to move around. If I’m in the game engine, and a giant rock starts moving, I know I’m in for some debugging work. But if I’m in the editor, and I tell a rock to move, I expect it to move. That’s the whole point of having an editor. So when this cliffside rock, which otherwise had been so pleasant, decided that it would only move in certain directions, I knew I had a bit of a mystery on my hands, one that eventually forced my brain to remember some very important things it had forgotten in the ten years since I last did any serious 3D graphics programming.

Foreign Languages

Working in someone else’s codebase is much like moving to a foreign country that happens to use the same alphabet as you do, but not the same language: technically, you can read, but you don’t really have any idea what most things mean. At first, every little thing you do is a painstaking linguistic procedure, and you have to constantly check and recheck things to make sure you actually know what’s going on. Over time, as you gain more experience, you start to intuit the way things work, and you’re able to operate at closer to your full speed.

I’m used to the process of working in unfamiliar codebases since I’ve worked with so many of them over the years. I tend to approach each new one the same way: first, I write something very isolated, and keep it walled off. This lets me start making some contributions right away, while I gradually learn how to interface with the rest of the code. In the case of The Witness, this was Walk Monster. Next, I try to do some performance optimization or bug fixing, so I learn to work locally, but directly, with existing code. That was fixing the five-second stall. Finally, I start picking real features to add to other people’s code, preferably in the systems that I will most likely need to learn well.

Since I’m working on the collision system, and at this point I am certain there will need to be some interactive tools for it, I picked The Witness editor as the best place to start adding real features. After playing with it for a while, I selected two features I thought would be good additions, both from the standpoint of learning the code and from the standpoint of being generally useful to people working on the game. The first feature was compatible camera controls, and the second was a translation manipulator.

By “compatible camera controls”, I mean camera controls that work like common 3D art packages. My understanding was that The Witness team did their modeling in Maya, so I put a toggle in the editor that, when enabled, allowed you to use ALT-drag camera movements that replicate the way Maya moves the camera. After checking this in, I learned from the artists that some of them prefer 3DSMAX, so I added another button that emulates its camera controls (once you do one, it’s very little incremental cost to add others, as they’re really all just other sets of bindings for the same movements). All of this went rather smoothly.

Unfortunately the second feature, the translation manipulator, did not go so smoothly at all.

The Translation Manipulator

By “translation manipulator”, I mean the standard three-axis overlay that modern 3D packages have where you can click and drag on any axis and move your selection along that axis. Depending on the package, there are also little squares on each plane that allow planar movement. I chose to include these, but to allow them to be toggled separately since not all packages have them and I didn’t want them to be in the way if an artist wasn’t accustomed to using them.

Compared to the camera controls, it was moderately more work to understand all the intricacies of the editor code involved with translation. But I worked my way through it, and eventually I had a translation manipulator working reasonably well in my test world, which was a completely empty world with just a few boxy entities that I could move around to see if everything was working.

When I was confident everything was solid — undo was working, occlusion was working, etc. — I tried it out on the full Witness island for a bit. Nothing seemed immediately wrong, so I checked in the feature on a toggle switch that defaulted to “off”, and asked Jon to check it out when he got a chance. I figured it was better not to check it in enabled by default and have the artists all start using it, only to find out it had some critical bug that would corrupt things in some irreparable way, losing lots of valuable work in the process. Since Jon does a lot of work in the editor, and he wrote a lot of the editor code originally, I figured he would know the things to try that might break so we could fix them before any real damage was done.

When I heard back from Jon, he said it did seem to be working correctly, but sometimes “moving along the Z axis wasn’t working”.

The Occasionally Movable Rock

I was not at all surprised to hear that Z-axis translation was unreliable. This was something I was expecting to have happen. Surely something was broken in the way my code interacted with the existing planar movement constraints, which were buttons the editor had always provided and which were the only way of constraining interactive movement before I added the translation manipulator. But I checked the code thoroughly, and I just didn’t see any way that I could be mishandling the Z axis in the intermittent way Jon had reported.

I tried playing around with movement more in the editor, to see if I could reproduce the problem myself. Soon, I started to notice that when I played with the solitary rock in the ocean, it wasn’t really “sticking” to the cursor as well as it should be; the code I had written was supposed to feel as if you had “grabbed” the manipulator, and it should follow your cursor rather exactly. It wasn’t doing that.

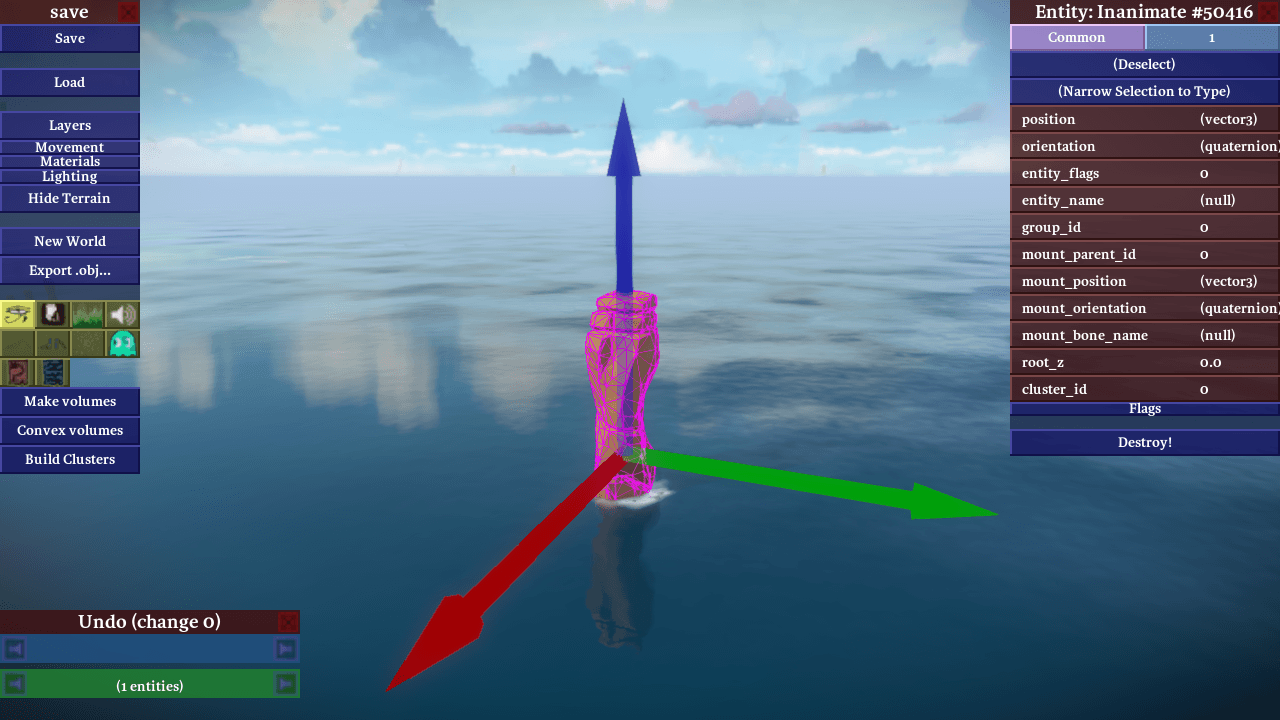

Then I noticed something even stranger. Depending on how I rotated the camera, I was able to find angles at which Y-axis dragging moved the rock extremely slowly even for large cursor movements. At one particular camera angle, the rock refused to move along the Y axis at all! For example, at this angle, I could move the rock along the Y (green) axis, albeit slowly:

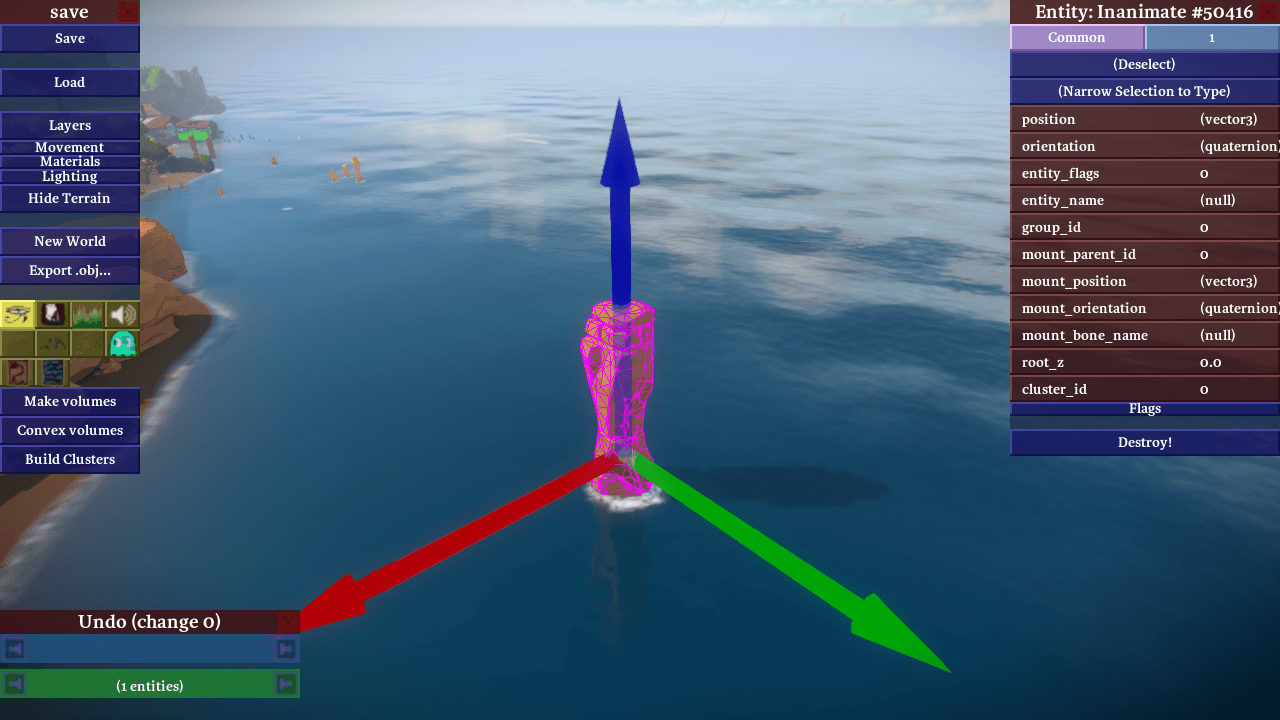

and at this angle, I could not move the rock along the Y axis at all:

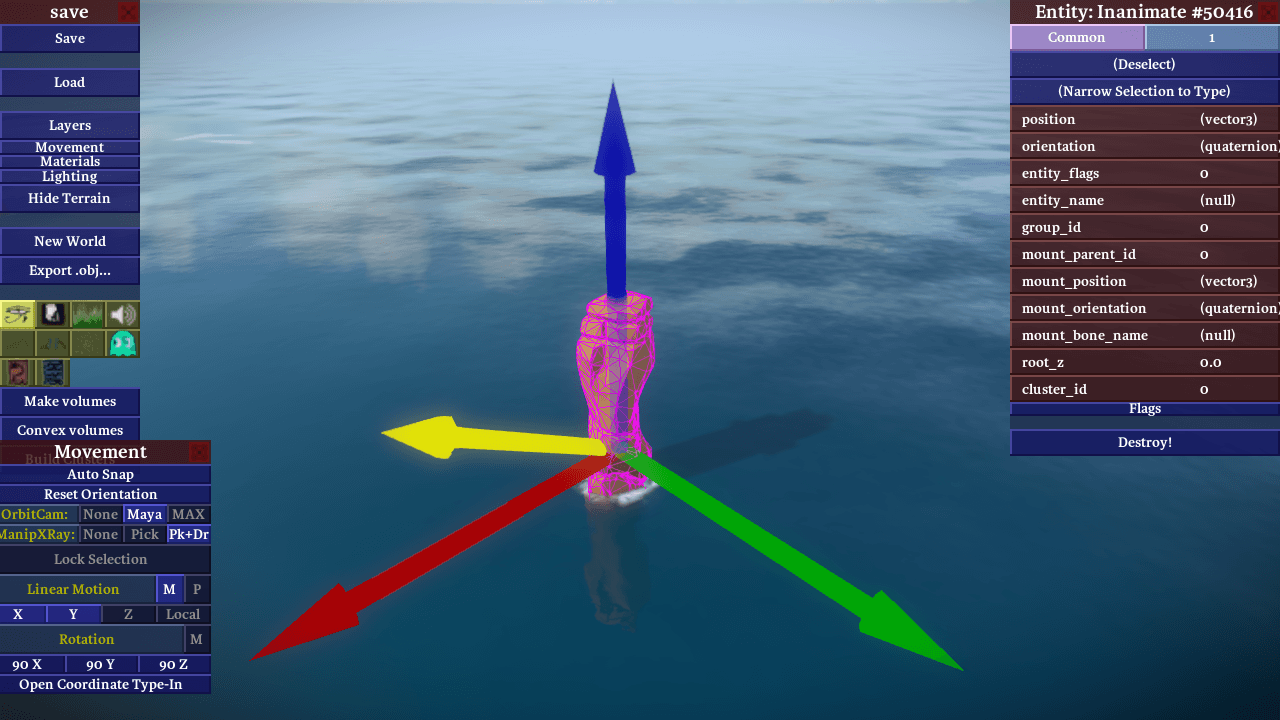

even though the other two axes worked fine, and from other camera angles the Y axis worked fine, too. Since the manipulator supported entity-relative axial movement as well as world-relative axial movement, I tried switching to entity-relative axes to test if there was something wrong with the Y axis specifically. I rotated the rock such that the Z (blue) axis was pointing in the direction that the Y axis pointed previously:

To my surprise, it now refused to move along Z! So it really had nothing to do with which semantic axis you were moving; it seemed to be the direction in the world and the angle of the camera alone that mattered for reproducing the bug.

Looking for more ideas about what the bug could be, I tried loading the empty test world again and playing around with the manipulator there. Again, to my surprise, it was completely impossible to reproduce the bug. No matter what camera angle I used, or where the axes were pointing, they always dragged perfectly, and seemingly at the correct speed to boot.

What on earth was going on? Clearly, it was time to think more deeply about the code and the behavior it was exhibiting.

Ray Versus Plane

In most 3D editing operations, you must confront the problem of turning a 2D cursor position into something useful in three dimensions. Since a 2D point on the screen mathematically represents a ray in three dimensions (everything in the world that is “under” that point from the perspective of the user), this typically involves taking the ray and intersecting it with something. For example, to implement picking of objects in 3D, you typically intersect this 3D ray with the objects in the world, and you see which intersection is closest. That will be the closest object under the cursor when the user looks at the screen, and therefore the one that they most often expect to pick when they press the mouse button.
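To make the picking idea concrete, here is a minimal Python sketch. It is not code from The Witness: it assumes each object is approximated by a bounding sphere, that the cursor ray is already available as an origin and a unit-length direction, and the names `ray_sphere_t` and `pick_closest` are hypothetical.

```python
import math

def ray_sphere_t(origin, direction, center, radius):
    """Nearest non-negative ray parameter t where the ray hits the sphere, or None.

    Assumes `direction` is unit length, so t is a distance along the ray.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    b = sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - c
    if disc < 0.0:
        return None  # the ray's line misses the sphere entirely
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):
        if t >= 0.0:
            return t  # first hit in front of the ray origin
    return None  # both hits are behind the ray origin

def pick_closest(origin, direction, spheres):
    """Return the name of the closest sphere under the ray, or None.

    `spheres` maps a name to a (center, radius) pair (an assumed layout).
    """
    best_name, best_t = None, math.inf
    for name, (center, radius) in spheres.items():
        t = ray_sphere_t(origin, direction, center, radius)
        if t is not None and t < best_t:
            best_name, best_t = name, t
    return best_name
```

A real editor would test against the actual geometry rather than spheres, but the "intersect everything, keep the smallest t" structure is the same.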

My translation manipulator also works by intersecting the ray implied by the cursor. Knowing which axis the user clicks on is analogous to the picking problem, and I essentially implemented it that way: I pretend that there are three long, skinny boxes that align with the axis arrows, and I test to see if the cursor ray intersects them.

Picking the axis is straightforward, but things get more complicated once dragging begins. Although I know which axis the user started dragging, in order to actually move things properly, I have to have a way of computing exactly how far they have dragged the object along the selected axis. Intuitively, you might think: well, why not just extend the box used to pick the axis to be infinitely long along the dragging axis, and continue intersecting with it as the user moves the cursor? That’s not a bad idea, and it would work, but it isn’t the best solution.

The reason is that it doesn’t feel very good to use. Although it will feel fine if the user drags right along the axis in question, a lot of times users don’t do that. They tend to drag erratically, and pay more attention to the object’s position than how closely they follow the line implied by the axis. This quickly moves the cursor off the imaginary box entirely, so the ray implied by the cursor won’t intersect it. This causes the manipulator to stop working until the user moves the cursor back onto the axis, resulting in a sticky and unpleasant user interaction.

What we’d rather have happen in this case is for the dragging to happen as if the user had moved the mouse to the point on the axis closest to where they actually dragged, even when they’ve wandered quite far from the axis itself. To see how to solve this problem geometrically, imagine a user dragging the mouse along the X axis of a 2D plane. If the user starts dragging erratically, and moves along Y as well, their cursor will end up out in the middle of the plane somewhere. Figuring out where they would have been on the X axis is quite simple, though: ignore the Y coordinate, and just use the X coordinate. Nothing could be simpler.

But how do we do this operation for an arbitrary 3D axis and a ray extending from the cursor? It turns out you can do it exactly the same way. All you have to do is pick a 3D plane that happens to contain the dragging axis, then intersect the cursor ray with that plane. That gives you a nice 2D coordinate on a 2D plane, where the dragging axis is analogous to the X axis in the simple example. You then “throw out the Y”, and are left with a drag that always feels nice in 3D, sticks the object tightly to the cursor, and does not require the user to drag close to the axis.
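The plane-then-project idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the manipulator code from The Witness: the plane is given in point-normal form (a point on the axis plus a unit normal perpendicular to the axis), the axis direction is unit length, and the function name `drag_distance_along_axis` is hypothetical.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def drag_distance_along_axis(ray_origin, ray_dir, axis_point, axis_dir,
                             plane_normal, eps=1e-9):
    """Signed distance along the axis implied by the cursor ray, or None.

    Step 1: intersect the cursor ray with the plane that contains the axis.
    Step 2: "throw out the Y" by keeping only the component of the hit
            point along the axis direction.
    """
    denom = dot(plane_normal, ray_dir)
    if abs(denom) < eps:
        return None  # ray is (nearly) parallel to the plane
    t = dot(plane_normal, sub(axis_point, ray_origin)) / denom
    if t < 0.0:
        return None  # the plane is behind the ray origin
    hit = tuple(o + t * d for o, d in zip(ray_origin, ray_dir))
    return dot(sub(hit, axis_point), axis_dir)
```

For example, with the axis along X through the origin and the XY plane as the dragging plane, a ray fired straight down from (3, 2, 10) hits the plane at (3, 2, 0), and the function returns 3: the stray 2 units of Y are simply discarded.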

You don’t need any fancy math to do this. It’s entirely constructible with simple operations available in any math library: dot product, cross product, and ray-intersects-plane, all three of which were already available in The Witness codebase.

So my translation manipulator was built entirely out of the most basic 3D operations in any math library. Yet somehow it was exhibiting very odd behavior atypical for a simple system. How could this be? What was I missing?

Debug Rendering

My first thought was that I must be picking the dragging plane poorly. Constructing planes in 3D with only partial information is always a little dicey; you need three non-collinear points to define a plane, but often you want to construct one with only one or two points. The translation manipulator is a perfect example: it needs a plane to operate, but it only really has a line to work with, which is equivalent to only two points. There are, in fact, an infinite number of planes that pass through the dragging axis of the translation manipulator. It’s much like a waterwheel: the dragging axis is the center axle, and each blade of the wheel is another valid choice for a plane.

So how do you pick one? The best method I’ve found for translation manipulators is to use the location of the user. Since we want the user to be able to drag around on this plane easily, it makes sense to say that, of all the planes containing the dragging axis, the best one is the one that is most visible to them. This avoids the potentially bad cases you can hit if you pick something independent of the user’s location, such as the resulting plane being edge-on or nearly edge-on so that the user cannot actually drag along it effectively.
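One way to read "most visible" is: among all unit normals perpendicular to the dragging axis, take the one that points most directly at the camera. The Python sketch below does that by removing the along-axis part of the vector from the manipulator to the camera. This is an assumed interpretation, not necessarily the exact construction used in the editor, and `most_visible_plane_normal` is a hypothetical name.

```python
import math

def most_visible_plane_normal(axis_dir, axis_point, camera_pos, eps=1e-9):
    """Unit normal of the plane through the axis that best faces the camera.

    Assumes `axis_dir` is unit length. Returns None when the camera sits
    (almost) exactly on the axis line, where every plane is edge-on.
    """
    to_cam = tuple(c - p for c, p in zip(camera_pos, axis_point))
    # Component of the to-camera vector that runs along the axis.
    along = sum(t * a for t, a in zip(to_cam, axis_dir))
    # What remains is perpendicular to the axis and points toward the camera.
    rejected = tuple(t - along * a for t, a in zip(to_cam, axis_dir))
    length = math.sqrt(sum(r * r for r in rejected))
    if length < eps:
        return None
    return tuple(r / length for r in rejected)
```

For a unit axis `a` and to-camera vector `v`, the same vector can be written with two cross products as `(a × v) × a`, which is how it tends to appear in code that only has dot and cross available.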

Since even simple 3D math like this can be difficult to verify by inspection alone, my first inclination was to add some debugging rendering to verify that I was picking the dragging plane properly. Since the camera angle clearly mattered, and the plane was one of the only things dependent on that angle, it seemed like a likely culprit. So I tried drawing the normal to the plane in yellow at the base of the manipulator:

Even in the cases where the dragging failed, the plane normal looked right. Maybe the positioning of the plane was wrong? I tried computing the location of the plane directly, by moving along the normal by the plane constant d and drawing the normal there. This, of course, put the normal nowhere near the manipulator, so I had to look around for it (the plane equation gives you the point closest to the origin, which has no real relationship to where the manipulator might be, other than that they both lie on the same infinite plane). But once I found it, even though it was far away, looking back toward the manipulator it still did seem like it was the right plane.
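The relationship between the plane constant and that far-away point can be checked with a small Python sketch. It assumes the convention that a point p lies on the plane when dot(n, p) = d with n unit length; engines differ on the sign of d, and the convention in The Witness is not stated here. The function names are hypothetical.

```python
def plane_constant(normal, point_on_plane):
    """Plane constant d under the assumed convention dot(normal, p) == d."""
    return sum(n * p for n, p in zip(normal, point_on_plane))

def closest_point_to_origin(normal, d):
    """Point on the plane nearest the world origin: move along the normal by d."""
    return tuple(n * d for n in normal)

# A manipulator far from the origin, on a plane facing straight up.
manipulator = (100.0, 200.0, 3.0)
normal = (0.0, 0.0, 1.0)
d = plane_constant(normal, manipulator)
anchor = closest_point_to_origin(normal, d)
```

Here `anchor` comes out as (0, 0, 3): it is on the same infinite plane as the manipulator, but more than 200 units away from it, which is why the debug normal had to be hunted for.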

Next, I moved on to the intersections. I tried drawing the intersection point that I had computed when picking the axis, and also the point that I had used to compute the plane constant. Both looked correct.

But then I tried drawing the actual intersection computed at each drag update, and that didn’t look correct. In fact, it didn’t really “look” at all. Although I was specifically telling the renderer to draw a white sphere where I’d found the intersection of the cursor line with the plane, no white sphere appeared. I checked the code again, and it looked correct, so I tried doing a few more test drags, this time from angles that had seemed to be working before. Now, the sphere showed up, although it was bigger than I thought it should be, which was odd.

I tried rotating the camera. I wrote the code so as to leave the sphere rendering wherever the last dragging intersection occurred, so if I stopped dragging, it just stayed where it was. This allowed me to rotate the camera and see precisely where the sphere was located, and as I did, I realized that the sphere appeared too large because it wasn’t even close to being on the plane I thought I was using. It was much closer to the camera. Weirder still was that, at the buggier camera angles, the sphere was jumping around erratically, and wasn’t really sticking to the cursor, which it always should do no matter what plane was being used. I was, after all, intersecting the cursor line with a plane. How could the intersection point possibly lie anywhere but directly under the cursor, regardless of the parameters of the plane?

It was at that moment that my math brain finally woke up. It had been sleeping for about a decade, which is how long it’d been since I’d done any real mathematical programming. In an instant, I knew both what the problem was and how to fix it.

Always Look Behind You

The problem was embarrassingly obvious when you consider the clues I’d been generously given and yet completely ignored. Remember when I said I drew the plane normal at the location indicated by the plane constant, and I had to look far away at the manipulator to see if it looked like it was in line with the plane? Well, if the generally erratic behavior of the manipulator hadn’t been telling enough, that little episode should have been the dead giveaway.

At this point, every serious 3D programmer who hasn’t been doing other work for ten years surely knows what the bug was. But for the sake of people who are less experienced, I decided to end this article with a bit of a cliffhanger.

I am going to tell you that you can find the bug by looking at this simplified version of the ray_vs_plane function in The Witness that I was calling (the real version does some epsilon checking in case the ray and plane are parallel, but otherwise it is identical):

And I will also show you this screenshot of what it looks like if you turn around 180 degrees from the rock and look back at the island itself:

But, until next time, I’m not going to tell you what the bug actually is. Can you find it, and figure out how to fix it, before then?